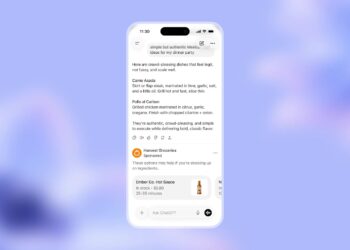

DALL·E 3 has been integrated into ChatGPT, giving Plus and Enterprise users the ability to turn conversations into images.

Describe a concept, and ChatGPT uses DALL·E 3 to generate a selection of images based on that description. Users can then ask for changes to those images directly in the conversation.

To keep generated content safe and appropriate, DALL·E 3 is deployed with a safety system that limits its ability to produce potentially harmful or offensive images, such as those depicting violence, adult content, or hate.

Safety checks are applied both to the user's prompt and to the resulting images. Feedback from early users and expert evaluators was crucial in identifying potential safety issues, particularly around graphic content and content that could be misleading.

Preparing DALL·E 3 for wide release also involved refining the model to reduce the likelihood of it generating images in the style of existing artists or unauthorized images of public figures.

Work was also done to improve demographic representation in the model’s outputs. More details on how DALL·E 3 was prepared for deployment are available in the DALL·E 3 system card.

As AI-generated imagery evolves quickly, safety and ethical considerations remain central. With DALL·E 3 integrated into ChatGPT, users gain image generation inside the conversation, alongside safeguards intended to limit harmful content and respect the work of artists.