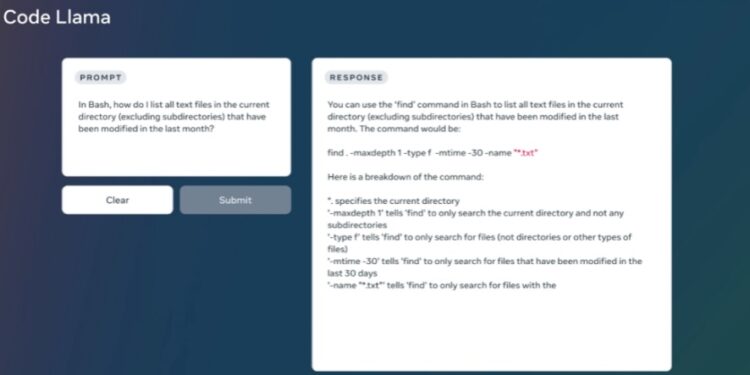

The tech industry took a notable step forward with the introduction of Code Llama, a large language model (LLM) tailored for generating code, discussing it, and assisting with coding tasks. The model promises to streamline the workflow of experienced developers and to ease the learning curve for newcomers to coding.

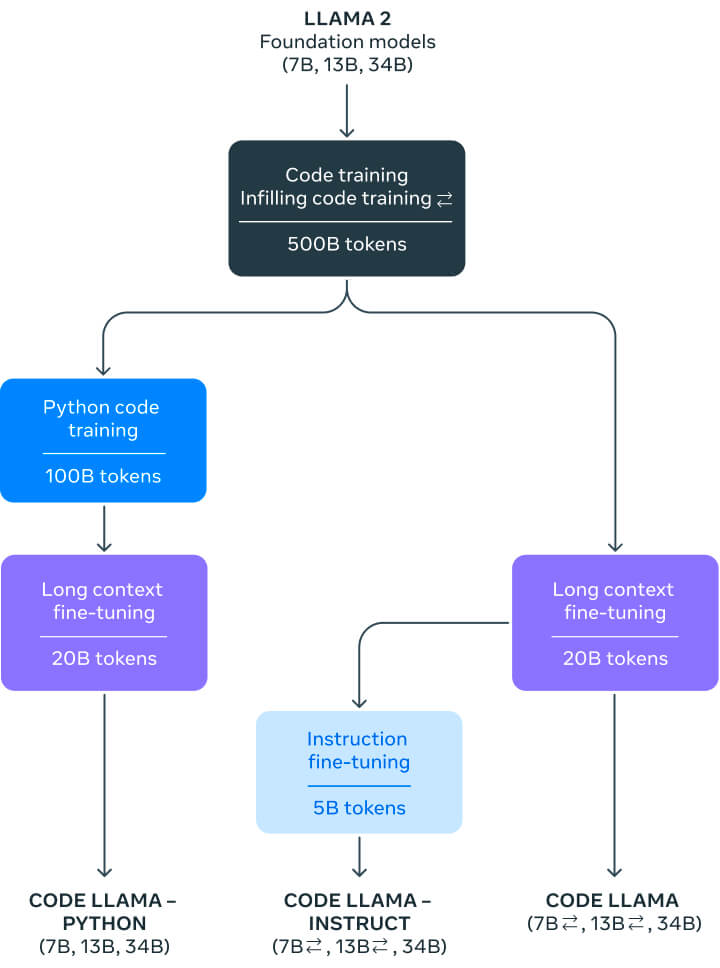

Derived from the Llama 2 model, Code Llama has undergone further training on code-focused datasets, which substantially improves its coding capabilities.

Key Features:

- Dual-Mode Operation: Code Llama can respond to both code and natural language prompts, showcasing versatility in tasks from code generation (e.g., producing the Fibonacci sequence) to debugging.

- Multilingual Support: It supports many popular programming languages including, but not limited to, Python, C++, Java, and TypeScript (JavaScript).
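
To make the Fibonacci example above concrete, here is a minimal Python sketch of the kind of function a code-generation prompt such as "write a function that returns the first n Fibonacci numbers" is meant to produce. It is hand-written for illustration and is not actual Code Llama output; the function name `fibonacci` and its signature are assumptions made for this example.

```python
def fibonacci(n: int) -> list[int]:
    """Return the first n Fibonacci numbers, starting from 0.

    Illustrative only: hand-written, not generated by Code Llama.
    """
    if n < 0:
        raise ValueError("n must be non-negative")
    sequence = []
    a, b = 0, 1
    for _ in range(n):
        sequence.append(a)
        # Advance the pair: the next term is the sum of the previous two.
        a, b = b, a + b
    return sequence


if __name__ == "__main__":
    print(fibonacci(10))
```

A debugging prompt would work the other way around: the user pastes a faulty version of a function like this and asks the model to find and explain the error.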

Available Variants:

- Three Sizes for Diverse Needs: Code Llama is available in three sizes: 7B, 13B, and 34B parameters, each trained on 500B tokens of code data. The 34B model gives the best results, while the smaller 7B and 13B models are faster and better suited to latency-sensitive tasks such as real-time code completion.

- Python-Specific Variant: Recognizing Python’s stature in the AI landscape, the Code Llama – Python model has been fine-tuned with 100B tokens of Python code to provide specialized support.

- Instruct Model for Intuitive Assistance: Code Llama – Instruct is a variant fine-tuned to follow natural language instructions, with the aim of producing more helpful and safer outputs.

Beyond code generation itself, Code Llama aims to relieve developers of repetitive tasks, leaving more time for innovative, human-centered work.

In keeping with an open approach to AI development, Code Llama is released under the same community license as Llama 2. This approach encourages community-driven evaluation and innovation, with the aim of safer and more responsible AI development.

The release of Code Llama marks a significant step for AI-assisted software development, with potential applications across many sectors. As the tech community anticipates wider adoption, many are eager to see how Code Llama will shape the future of coding.